Research

The work of researchers at DSSC focuses on the following areas: Machine Learning Solutions in Computer Vision, Spatio-Temporal Analytics, Record Linkage for Multi-Source Data Integration, Natural Language Processing and Brain Science, High Performance Computing, Fog/Edge Computing, Cloud Computing, and Dependable Energy Efficient Systems.

The Machine Learning Solutions in Computer Vision group focuses on fundamental research topics and real-world applications in computer vision, based on a variety of machine learning approaches.

Deep Metric Learning: learning embeddings that capture semantic similarity among data items, which plays a crucial role in many computer vision applications.

Video Object Detection: one of the fundamental problems in computer vision and an essential module of almost all camera-based intelligent systems, such as surveillance equipment. It also provides semantic indexing for search.

Domain Adaptation: addressing problems in which well-annotated labels are available in the source domain but few or none are available in the target domain. We have proposed a novel domain-adaptive data augmentation method that combines style transfer with domain discrepancy measurement.
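A common training objective in deep metric learning is the triplet loss, which pulls an anchor embedding towards a same-class (positive) example and pushes it away from a different-class (negative) example by at least a margin. The sketch below is a generic illustration with hand-written 2-D embeddings and a hypothetical `triplet_loss` helper; it is not the group's method, and in practice the embeddings would be produced by a deep network.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Hinge-style triplet loss: zero once the negative is at least
    `margin` farther from the anchor than the positive is."""
    d_pos = euclidean(anchor, positive)
    d_neg = euclidean(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)


anchor = [0.0, 0.0]
positive = [0.0, 1.0]  # same class, distance 1 from the anchor
negative = [3.0, 0.0]  # different class, distance 3 from the anchor

print(triplet_loss(anchor, positive, negative))    # 0.0, since 1 - 3 + 1 < 0
print(triplet_loss(anchor, positive, [1.5, 0.0]))  # 0.5, since 1 - 1.5 + 1 = 0.5
```

During training, the gradient of this loss is what reshapes the embedding space; triplets that already satisfy the margin contribute nothing.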

The Spatio-Temporal Analytics effort focuses on research topics in spatio-temporal data processing inspired by various applications.

Representation Learning: learning representations of complex time series and spatio-temporal data objects so that they can be adapted to various application-driven scenarios.

Anomaly Detection: identifying anomalous behaviour from spatio-temporal trajectories, for applications such as smart cities and crime surveillance.

Spatio-Temporal Exploratory Analytics: using large spatio-temporal datasets to identify regularities across them, for applications such as team-sports analytics and the mobility signatures of different groups of people.
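As a very simple illustration of trajectory-based anomaly detection, one can compute the speed of each segment of a trajectory and flag segments whose speed lies far from the median, measured in units of the median absolute deviation (MAD). This is a generic baseline sketch, not the group's approach; the `(t, x, y)` point format, the threshold `k` and the helper names are assumptions made for the example.

```python
import math
from statistics import median


def segment_speeds(traj):
    """traj: list of (t_seconds, x_metres, y_metres) points sorted by time."""
    return [
        math.hypot(x1 - x0, y1 - y0) / (t1 - t0)
        for (t0, x0, y0), (t1, x1, y1) in zip(traj, traj[1:])
    ]


def flag_anomalous_segments(traj, k=3.0):
    """Return indices of segments whose speed deviates from the median
    by more than k median absolute deviations (a robust outlier rule)."""
    speeds = segment_speeds(traj)
    med = median(speeds)
    mad = median(abs(s - med) for s in speeds)
    scale = mad if mad > 0 else 1e-9  # guard against identical speeds
    return [i for i, s in enumerate(speeds) if abs(s - med) / scale > k]


# Hypothetical trajectory: roughly 1 m/s walking, with one 50 m jump in 1 s.
traj = [(0, 0.0, 0.0), (1, 1.0, 0.0), (2, 2.2, 0.0), (3, 3.0, 0.0),
        (4, 53.0, 0.0), (5, 54.1, 0.0), (6, 55.0, 0.0)]
print(flag_anomalous_segments(traj))  # [3]
```

Median and MAD are used instead of mean and standard deviation because a single extreme segment would otherwise inflate the spread and mask itself.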

Blocking Methods for Faster Record Linkage: developing computationally lightweight methods for selecting candidate record pairs across multiple heterogeneous data sources, in order to reduce the number of computationally expensive comparisons in the linkage phase.

Machine Learning Based Approaches to Record Linkage: developing supervised and unsupervised methods for detecting records across various data sources that refer to the same real-world entity (e.g., a person). This includes methods for structured data that apply different similarity measures to corresponding attributes, and deep-learning-based approaches for unstructured data.
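The idea behind blocking can be sketched with standard key-based blocking: records are grouped by a cheap blocking key, and only records that share a key (and come from different sources) become candidate pairs for the expensive comparison step. The blocking key used here (first three letters of the surname plus birth year), the record fields and the helper names are hypothetical choices for illustration, not the group's methods.

```python
from collections import defaultdict
from itertools import combinations


def blocking_key(record):
    # Hypothetical key: first three letters of the surname plus birth year.
    return (record["surname"][:3].lower(), record["birth_year"])


def candidate_pairs(records):
    """Group records by blocking key; within each block, pair only
    records that come from different sources."""
    blocks = defaultdict(list)
    for rec in records:
        blocks[blocking_key(rec)].append((rec["source"], rec["id"]))
    pairs = set()
    for members in blocks.values():
        for (src_a, id_a), (src_b, id_b) in combinations(sorted(members), 2):
            if src_a != src_b:  # multi-source linkage: skip same-source pairs
                pairs.add((id_a, id_b))
    return pairs


records = [
    {"source": "A", "id": "A1", "surname": "Murphy", "birth_year": 1980},
    {"source": "A", "id": "A2", "surname": "Kelly", "birth_year": 1975},
    {"source": "B", "id": "B1", "surname": "Murphey", "birth_year": 1980},
    {"source": "B", "id": "B2", "surname": "Kelley", "birth_year": 1975},
    {"source": "B", "id": "B3", "surname": "Smith", "birth_year": 1990},
]
print(sorted(candidate_pairs(records)))  # [('A1', 'B1'), ('A2', 'B2')]
```

Here only 2 pairs reach the detailed comparison step instead of all 6 cross-source pairs; the trade-off is that true matches with differing keys (for example, a typo in the first letters of a surname) are missed, which is why the choice of blocking method matters.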

In Natural Language Processing and Brain Science, we develop machine learning methods to process and represent words and sentences in text.

Clinical Narrative Analytics: building large-scale neural network language models of free-form clinical text, enabling the detection of dementia diagnoses and decision-support technologies for healthcare professionals.

Computational Approaches to Fake News: we study medical news, aiming to understand the affective character of medical fake news, to improve medical fake news detection through inconsistency assessment, and to combat medical fake news by developing contextual retrieval methods.

Modelling Object Meaning: we combine text and image data to represent the meaning of words and objects in a cognitively plausible way.

Zero-Shot Image Identification for a Brain-Computer Interface: we use a combination of deep neural network models of vision and embedding models of object meaning to retrieve the image a person is viewing from their brain activation data (EEG).
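The retrieval step of a zero-shot identification pipeline can be sketched as nearest-neighbour search in a shared embedding space: assuming some decoder has already mapped an EEG trial to a predicted embedding, candidate images (which need not have been seen during training) are ranked by cosine similarity to it. The 3-D embeddings, the candidate labels and the `rank_candidates` helper below are hypothetical; the decoder itself is not shown and this is not the group's actual model.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def rank_candidates(predicted, candidates):
    """Sort candidate labels from most to least similar to the predicted embedding."""
    return sorted(candidates, key=lambda label: cosine(predicted, candidates[label]),
                  reverse=True)


# Hypothetical embeddings of candidate images (in practice from vision/semantic models).
candidates = {
    "dog": [0.9, 0.1, 0.0],
    "car": [0.0, 0.2, 0.9],
    "cat": [0.8, 0.3, 0.1],
}
# Hypothetical output of an EEG-to-embedding decoder for a single trial.
predicted = [0.85, 0.15, 0.05]

print(rank_candidates(predicted, candidates))
```

Because identification happens by comparison in the embedding space rather than by a fixed classifier, new candidate images can be added without retraining the decoder.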

In High Performance Computing, we investigate runtime scheduling, memory management, compile-time optimisation and related systems software techniques to facilitate the uptake of high performance computing and to enhance performance.

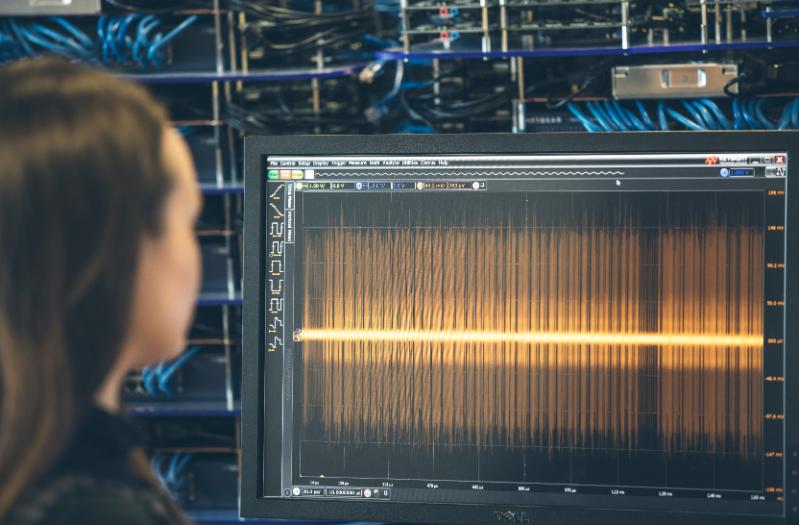

High Performance Graph Processing: we investigate load balancing, graph partitioning, memory management, parallelism and scheduling for graph processing problems. Specific solutions for non-uniform memory access (NUMA) architectures, memory locality optimisation, and vertex- and edge-balanced ordering (VEBO) have proven effective.

Transprecision Computing: this concept aims to redefine the overly conservative “precise” abstraction of computing and to replace it with a more flexible and efficient one. We investigate its application to problems in scientific computing (the figure illustrates the performance of linear solvers) and in machine learning.
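The load-balancing idea behind vertex- and edge-balanced ordering can be illustrated with a simple greedy heuristic: vertices are placed in decreasing order of degree on the partition that currently holds the fewest edges, zero-degree vertices are then used to even out vertex counts, and vertices are renumbered so that each partition occupies a contiguous ID range. This is a simplified sketch loosely inspired by VEBO, not the published algorithm; `balanced_partition` and the example degrees are hypothetical.

```python
def balanced_partition(degrees, num_parts):
    """degrees: dict vertex -> degree.
    Returns (assignment, new_order, edges_per_part, vertices_per_part)."""
    edges = [0] * num_parts
    verts = [0] * num_parts
    assign = {}
    # Pass 1: highest-degree vertices first, each on the partition with the
    # fewest edges so far (ties broken by fewer vertices, then lower index).
    for v in sorted((v for v in degrees if degrees[v] > 0), key=lambda v: -degrees[v]):
        p = min(range(num_parts), key=lambda i: (edges[i], verts[i]))
        assign[v] = p
        edges[p] += degrees[v]
        verts[p] += 1
    # Pass 2: zero-degree vertices add no edges, so use them to balance vertex counts.
    for v in (v for v in degrees if degrees[v] == 0):
        p = min(range(num_parts), key=lambda i: verts[i])
        assign[v] = p
        verts[p] += 1
    # Renumber: partition 0's vertices first, then partition 1's, and so on.
    order = sorted(degrees, key=lambda v: (assign[v], -degrees[v]))
    return assign, order, edges, verts


degrees = {0: 5, 1: 4, 2: 3, 3: 2, 4: 1, 5: 1, 6: 0, 7: 0}
assign, order, edges, verts = balanced_partition(degrees, 2)
print(edges, verts)  # [8, 8] [4, 4]
print(order)         # [0, 3, 4, 6, 1, 2, 5, 7]
```

Balancing edges matters because edge-centric work dominates many graph algorithms, while balancing vertices matters for vertex-centric phases; a partition that is balanced in only one of the two can still leave threads idle.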

In Fog/Edge Computing, we develop novel, scalable architectures and approaches that bring distant cloud services closer to end-users at the edge of the wired network, making Internet applications more responsive.

Modelling Fog Offloading: understanding the approaches required to bring services from the cloud to the edge and to make efficient offloading decisions, such as where, among a set of edge options, services from the cloud should be placed.

Fog Computing Benchmarks: developing the first open-source fog computing benchmarks, comprising six applications, which allow us to quantify the performance benefits of using the fog.

Edge Node Resource Management: developing the first academic prototype for efficiently managing the lifecycle of an application, from its deployment onto the edge until its termination.

Together, this research enhances our fundamental understanding of the integrated use of the cloud and the edge (fog).
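An offloading decision of the kind described above can be sketched with a deliberately simple cost model: the estimated response time at a site is its network round-trip time plus the service's work divided by the site's processing capacity, and the service is placed on the feasible site (enough free memory) with the lowest estimate. The `Site` fields, the cost model and all numbers are hypothetical; real fog offloading models also account for queueing, data transfer and migration costs.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    rtt_ms: float               # round-trip network latency from the user
    capacity_ops_per_ms: float  # processing speed available to the service
    free_memory_mb: int


def estimated_response_ms(site, work_ops):
    """Hypothetical cost model: network latency + compute time (no queueing)."""
    return site.rtt_ms + work_ops / site.capacity_ops_per_ms


def choose_site(sites, work_ops, memory_mb):
    """Pick the feasible site with the lowest estimated response time, or None."""
    feasible = [s for s in sites if s.free_memory_mb >= memory_mb]
    if not feasible:
        return None
    return min(feasible, key=lambda s: estimated_response_ms(s, work_ops))


sites = [
    Site("cloud", rtt_ms=80.0, capacity_ops_per_ms=100.0, free_memory_mb=100_000),
    Site("edge-a", rtt_ms=5.0, capacity_ops_per_ms=10.0, free_memory_mb=512),
    Site("edge-b", rtt_ms=8.0, capacity_ops_per_ms=20.0, free_memory_mb=2048),
]
print(choose_site(sites, work_ops=400, memory_mb=1024).name)    # edge-b
print(choose_site(sites, work_ops=20_000, memory_mb=256).name)  # cloud
```

The two calls show the basic trade-off: a light, latency-sensitive request is best served at the edge, while a compute-heavy one can be faster in the distant but more powerful cloud.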

In Cloud Computing, we propose frameworks and algorithms for cloud application management and anomaly detection that help cloud users maintain the Quality of Service (QoS) and security of their applications.

Cloud Application Management: we have designed a framework named MyMinder (Multi-objective dYnamic MIgratioN Decision makER). MyMinder monitors cloud applications in their post-deployment phase and helps cloud users decide dynamically whether, and where (to which alternative VM type and/or cloud provider), to migrate an application when its QoS is violated.

Cloud-Based Manufacturing: in collaboration with Mechanical Engineering researchers at Queen's, we are developing SmartMaaS (Smart Manufacturing as a Service), a cloud-based manufacturing framework. SmartMaaS delivers a cloud-based solution for the manufacturing process, covering design, manufacture and evaluation, using different cloud services.

Cloud Anomaly Detection: developing efficient and scalable tools for detecting anomalies in both host-level and VM-level resource utilisation in cloud data centres.
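A migration decision of the "whether and where" kind can be sketched as a weighted-sum multi-objective choice: do nothing while the observed latency meets the service-level target; otherwise, among alternative VM types or providers predicted to meet the target, pick the one with the lowest weighted score of normalised cost and normalised predicted latency. This is an illustrative sketch, not MyMinder's actual algorithm; `Option`, `migration_decision`, the weights and the provider names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Option:
    provider: str
    vm_type: str
    hourly_cost: float
    predicted_latency_ms: float


def migration_decision(observed_latency_ms, sla_ms, options, w_cost=0.5, w_latency=0.5):
    """Return the Option to migrate to, or None (QoS met, or no option meets the SLA)."""
    if observed_latency_ms <= sla_ms:
        return None  # no QoS violation: stay on the current deployment
    ok = [o for o in options if o.predicted_latency_ms <= sla_ms]
    if not ok:
        return None  # no alternative is predicted to restore QoS
    max_cost = max(o.hourly_cost for o in ok)
    max_lat = max(o.predicted_latency_ms for o in ok)

    def score(o):
        # Normalise each objective to [0, 1] so the weights are comparable.
        return w_cost * o.hourly_cost / max_cost + w_latency * o.predicted_latency_ms / max_lat

    return min(ok, key=score)


options = [
    Option("provider-x", "large", hourly_cost=0.20, predicted_latency_ms=120.0),
    Option("provider-y", "medium", hourly_cost=0.10, predicted_latency_ms=180.0),
    Option("provider-x", "small", hourly_cost=0.05, predicted_latency_ms=260.0),
]
choice = migration_decision(observed_latency_ms=350.0, sla_ms=200.0, options=options)
print(choice.provider, choice.vm_type)  # provider-y medium
```

With equal weights, the cheaper "medium" option wins (score 0.75 against about 0.83 for "large"); raising `w_latency` shifts the choice towards faster, more expensive options, which is the kind of trade-off a multi-objective decision maker has to expose to cloud users.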

In Dependable Energy Efficient Systems, our research focuses on modelling hardware behaviour and designing cross-layer schemes that enable systems to strike the ‘right’ balance between power, performance and reliability/QoS.

System Modelling: characterising the power, performance and reliability of processors and memories, and developing machine-learning-based models that predict system behaviour under various operating settings, environmental conditions and executed workloads.

Data-Aware Resilient Processing: developing circuit-architecture mechanisms that dynamically adapt frequency/voltage based on the type of executed instructions and the data they process.

Heterogeneous/Approximate Memory Design: implementing heterogeneous memory subsystems and QoS-aware power-reliability management schemes that combine low-power memory chips, which store error-resilient data, with robust memory chips operating at default settings, which store critical data.
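The data placement policy implied by a heterogeneous/approximate memory design can be sketched as follows: critical buffers must go to robust memory, while error-resilient buffers go to low-power memory when space allows and otherwise fall back to robust memory. The `Buffer` type, the capacities and the buffer names are hypothetical, and a real scheme would also manage voltage, refresh and QoS targets at run time.

```python
from dataclasses import dataclass


@dataclass
class Buffer:
    name: str
    size_mb: int
    critical: bool  # True if bit errors in this data are unacceptable


def place(buffers, robust_capacity_mb, low_power_capacity_mb):
    """Map each buffer name to 'robust' or 'low_power' memory."""
    placement = {}
    robust_free, lp_free = robust_capacity_mb, low_power_capacity_mb
    # Stable sort: critical buffers first, so they claim robust memory before fallbacks.
    for b in sorted(buffers, key=lambda b: not b.critical):
        if b.critical:
            if b.size_mb > robust_free:
                raise MemoryError(f"no robust memory left for critical buffer {b.name}")
            placement[b.name] = "robust"
            robust_free -= b.size_mb
        elif b.size_mb <= lp_free:
            placement[b.name] = "low_power"
            lp_free -= b.size_mb
        elif b.size_mb <= robust_free:
            placement[b.name] = "robust"  # resilient data may fall back to robust memory
            robust_free -= b.size_mb
        else:
            raise MemoryError(f"no memory left for buffer {b.name}")
    return placement


buffers = [
    Buffer("page_tables", 64, critical=True),
    Buffer("image_frames", 1024, critical=False),
    Buffer("audio_samples", 256, critical=False),
    Buffer("control_state", 32, critical=True),
]
print(place(buffers, robust_capacity_mb=512, low_power_capacity_mb=1024))
```

Placing critical data first guarantees it is never displaced by resilient data; the power saving then depends on how much resilient data fits into the low-power chips.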